The Minimum Digital Kernel of an Unbundled State

From Transactions to Decisions: The Governance Layer of Digital Public Infrastructure

TL;DR

Government services often fail in a quiet way. You apply for a benefit, get rejected, and no one can clearly explain: Which rule was used? Which record was checked? Who is responsible? How do you fix it?

In countries like India, this problem is massive. Hundreds of millions of people move across districts and states for work. Addresses change. Records don’t match. Climate shocks displace families. Courts are overloaded with millions of pending cases, many linked to administrative decisions that could have been resolved earlier if the system clearly showed what went wrong. When decisions are opaque, vulnerable people get excluded, and fixing errors becomes slow, costly, and dependent on middlemen.

The Minimum Digital Kernel is a small shared backbone that makes every decision explainable and fixable, even when services are delivered through many apps, offices, or intermediaries. Its key output is a “decision receipt” (a signed decision trace) that shows the exact rule used, the data checked, the reason for the outcome, and which certified authority made the decision. It cannot be quietly changed.

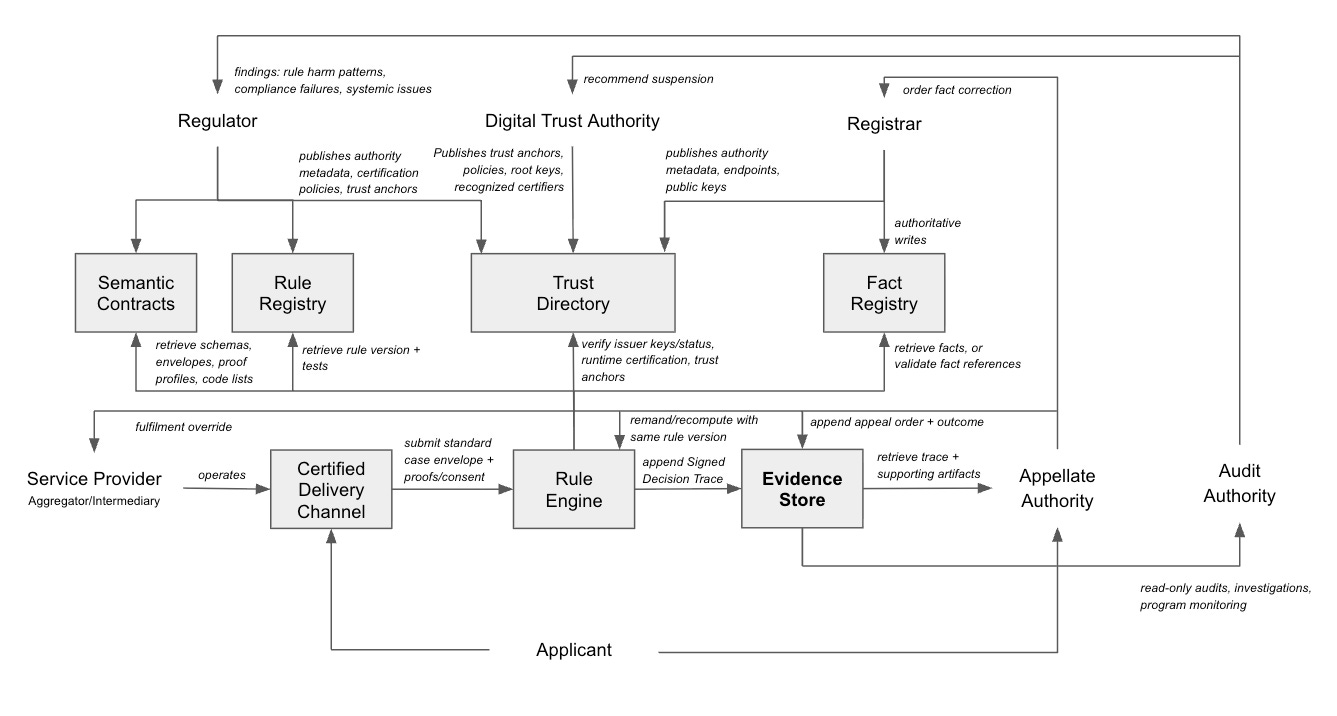

To make this possible, the system needs a few shared parts: a public list of who is authorised to act, clear and versioned digital rulebooks, official registries that own and correct facts, a common decision service that applies rules consistently, a secure evidence store, an independent appeals authority that can order corrections and re-checks, and an audit authority that spots systemic problems.

This becomes even more critical as AI spreads through government systems. AI can process thousands of applications in seconds. But without clear rules, traceable decisions, and enforceable remedies, AI will simply scale confusion and exclusion. The kernel ensures that even when AI is used, every outcome is still tied to published rules, recorded with reasons, and open to appeal.

Digital identity, payments, and data exchange help people, money, and information move at scale. The Minimum Digital Kernel makes sure decisions move with accountability.

With this in place, “address mismatch” stops being a dead end. You see what failed, you know who must fix it, and the system is required to recompute the decision once the error is corrected. That is how digital government becomes fair, not just fast.

A citizen applies for a benefit through an app, gets rejected for “address mismatch,” and is told to visit an office. The office says the address is wrong in a registry maintained by another department. The citizen goes there, is asked for paper proofs, and is told the correction will take weeks. Meanwhile, a local agent offers to “fix it” for a fee. When the citizen asks why the system rejected them, no one can show which rule was applied, which data was used, or who is responsible for correcting what.

This is the quiet failure mode of digitization at scale. Not lack of policy. Lack of a verifiable chain from authority to facts to decisions to remedy.

In the previous essay, I argued that the state can be unbundled: not dismantled, but modularized. The point is to separate functions that should not be vertically integrated inside the same institution, especially when doing so creates opacity, discretionary power, and brittle service delivery. But institutional unbundling only works if there is a thin shared digital waist that keeps the system coherent while allowing plural delivery at the edges.

I call it the Minimum Digital Kernel. It is the smallest set of shared digital capabilities needed for an unbundled state to behave like a rule-of-law machine: legitimacy is legible, decisions are reproducible, and remedies are enforceable. Everything else (the apps, counters, intermediaries, and fulfilment channels) can remain plural.

The kernel’s primary artifact is the signed decision trace: a portable, appeal-ready proof of what happened. If you design for the trace, the rest of the kernel becomes obvious. You need to know who was authorized, which rule version ran, what facts were relied on, what checks were performed, and what reason codes were produced. You need the trace to be verifiable and durable. And you need a remedy path that can change outcomes and correct the underlying world.

Digital Public Infrastructure has focused on enabling the flow of people (identity), money (payments), and data (consented exchange) at scale. The Minimum Digital Kernel extends this idea to the flow of decisions. It introduces the shared governance primitives that make rule application traceable, appealable, and enforceable in an unbundled ecosystem. In that sense, it is not separate from DPI, but its next logical layer: governance infrastructure for decision-heavy public services.

Here are the kernel primitives that make the decision trace possible.

Trust Authority and Trust Directory of Authority: make legitimacy machine-verifiable

In the physical state, legitimacy is expressed through seals, letterheads, and office locations. In a digital ecosystem, legitimacy must be expressed through discoverable metadata and cryptographic trust. The Directory is the public map of authority: who the regulators are, who the registrars are, which service providers and intermediaries are certified, what roles they play, where their endpoints are, and which public keys should be used to verify them.

To avoid turning this into a new central choke point, it helps to separate the mechanism from its governance. The Directory is a publication and discovery layer. The authority to certify participants, define acceptable cryptographic suites, set revocation expectations, and handle key compromise sits with designated trust governance, typically exercised by regulators (or a formally designated trust authority) under published policies and oversight. The point is not to create one super-ministry of trust. The point is to make trust explicit, machine-checkable, and auditable, so no participant is “trusted by assumption.”

This does two things. It prevents illegitimate channels from masquerading as the state, and it prevents interoperability from degenerating into private integrations. Participants can discover the authoritative source for rules and facts, and verifiers can check credentials and decision traces against published keys, certification status, and trust anchors. When certification is withdrawn or keys are rotated, those changes are published, time-stamped, and reviewable, so trust can be updated quickly without becoming arbitrary.
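To make “published, time-stamped, and reviewable” concrete, here is a minimal Python sketch of a directory that records certification and revocation events with timestamps. A verifier can then ask whether a signer was certified at the moment a decision was made, not merely today. The class names, fields, and identifiers (`TrustDirectory`, `DirectoryEvent`, `engine-A`, `key-1`) are hypothetical, and a real directory would publish signed metadata rather than hold in-memory state.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DirectoryEvent:
    """One published, time-stamped change to a participant's trust status (hypothetical schema)."""
    at: datetime
    participant_id: str
    key_id: str
    action: str  # "certify" or "revoke"


@dataclass
class TrustDirectory:
    """Append-only event log; current status is derived by replay, never overwritten."""
    events: list[DirectoryEvent] = field(default_factory=list)

    def publish(self, event: DirectoryEvent) -> None:
        # Require chronological publication so the history stays reviewable.
        if self.events and event.at < self.events[-1].at:
            raise ValueError("events must be published in time order")
        self.events.append(event)

    def was_certified(self, participant_id: str, key_id: str, at: datetime) -> bool:
        """Replay events up to `at` to decide whether this key was trusted at that moment."""
        certified = False
        for e in self.events:
            if e.at > at:
                break
            if e.participant_id == participant_id and e.key_id == key_id:
                certified = e.action == "certify"
        return certified


def day(n: int) -> datetime:
    return datetime(2025, 1, n, tzinfo=timezone.utc)


directory = TrustDirectory()
directory.publish(DirectoryEvent(day(1), "engine-A", "key-1", "certify"))
directory.publish(DirectoryEvent(day(10), "engine-A", "key-1", "revoke"))

print(directory.was_certified("engine-A", "key-1", day(5)))   # True
print(directory.was_certified("engine-A", "key-1", day(12)))  # False
```

Status is replayed from the event log, so a revocation on day 10 does not retroactively invalidate a trace signed on day 5. That is exactly the question an appellate review needs answered.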

Rules Registry: publish versioned, testable rulebooks

Most service delivery failure is not because policy is unclear. It is because policy gets reinterpreted at every desk, counter, and software screen. In an unbundled state, regulators must publish rules as a versioned, machine-readable rulebook. Not merely a PDF notification, but executable logic with reason codes and tests.

Versioning is non-negotiable. Any decision trace must bind to a specific rule version so accountability survives rule change. Appellate review should be able to ask, “Was the correct rule version applied at the time, and was it applied correctly?” not “What does the rule say today?”
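A minimal sketch of version binding, assuming each published rule version carries an effective-from date. The `RuleVersion` structure, the version labels, the reason codes, and the income limits are illustrative inventions, not a real program's rules. The key behavior is that lookup is by decision date, so an appeal replays the version that was in force at the time.

```python
from bisect import bisect_right
from dataclasses import dataclass
from datetime import date
from typing import Callable

Evaluator = Callable[[dict], tuple[str, list[str]]]


@dataclass(frozen=True)
class RuleVersion:
    version: str
    effective_from: date
    evaluate: Evaluator  # executable logic: facts -> (decision, reason codes)


def income_rule(limit: int) -> Evaluator:
    """Build a hypothetical eligibility rule with a published income limit."""
    def evaluate(facts: dict) -> tuple[str, list[str]]:
        if facts["annual_income"] <= limit:
            return "ELIGIBLE", ["R-INCOME-OK"]
        return "INELIGIBLE", ["R-INCOME-ABOVE-LIMIT"]
    return evaluate


class RulesRegistry:
    def __init__(self) -> None:
        self._versions: list[RuleVersion] = []

    def publish(self, rv: RuleVersion) -> None:
        # Published versions are never edited; keep them ordered by effective date.
        self._versions.append(rv)
        self._versions.sort(key=lambda v: v.effective_from)

    def version_in_force(self, on: date) -> RuleVersion:
        dates = [v.effective_from for v in self._versions]
        i = bisect_right(dates, on)
        if i == 0:
            raise LookupError(f"no rule version in force on {on}")
        return self._versions[i - 1]


registry = RulesRegistry()
registry.publish(RuleVersion("v1", date(2024, 4, 1), income_rule(100_000)))
registry.publish(RuleVersion("v2", date(2025, 4, 1), income_rule(120_000)))

rv = registry.version_in_force(date(2025, 1, 15))
print(rv.version, rv.evaluate({"annual_income": 110_000}))
# v1 ('INELIGIBLE', ['R-INCOME-ABOVE-LIMIT'])
```

Under `v2` the same applicant would be eligible, which is why the trace must record `v1`: the appeal asks whether the rule in force was applied correctly, not what today's rule would say.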

Semantic Contracts Registry: standardize meaning, proofs, and envelopes

Rules alone are not enough. Interoperability fails less on logic and more on semantics and formats. If different issuers interpret “residency” differently, verification becomes political. If different providers submit different request structures, every integration becomes bespoke. So the kernel must include a public, versioned semantic contract layer.

The contract layer has three parts. Schemas define meaning: canonical definitions for facts, outcomes, and reason codes, including code lists and units. Profiles define proofs: how credentials must be presented and verified, what cryptographic suites are supported, what issuer checks are required (via the trust directory), and what revocation expectations exist (under trust authority policy). Envelopes define exchange: the standard case package format sent to the decisioning utility and the standard decision trace format returned. Proof profiles should explicitly specify how identity assurance is established (for example, through an e-ID or identity provider credential), and how consented data access is recorded when a data exchange rail is used.
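To illustrate how an envelope contract is enforced at the boundary, here is a sketch that validates a case package before any rule runs. The field names, the `case-envelope/1.0` version string, and the allowed proof types are assumptions for illustration. A real contract layer would more likely be expressed in a standard schema language (for example JSON Schema) than in hand-written checks.

```python
# Hypothetical published contract: schema version, required fields with types, and a code list.
CASE_ENVELOPE_V1 = {
    "schema_version": "case-envelope/1.0",
    "required": {
        "case_id": str,
        "program_id": str,
        "applicant_ref": str,
        "proofs": list,
    },
    "allowed_proof_types": {"residency", "income", "identity"},
}


def validate_envelope(package: dict, contract: dict = CASE_ENVELOPE_V1) -> list[str]:
    """Return contract violations as standard codes; an empty list means the package conforms."""
    errors = []
    if package.get("schema_version") != contract["schema_version"]:
        errors.append("SCHEMA_VERSION_MISMATCH")
    for name, expected_type in contract["required"].items():
        if name not in package:
            errors.append(f"MISSING_FIELD:{name}")
        elif not isinstance(package[name], expected_type):
            errors.append(f"WRONG_TYPE:{name}")
    proofs = package.get("proofs")
    for proof in proofs if isinstance(proofs, list) else []:
        ptype = proof.get("type") if isinstance(proof, dict) else None
        if ptype not in contract["allowed_proof_types"]:
            errors.append(f"UNKNOWN_PROOF_TYPE:{ptype}")
    return errors


package = {
    "schema_version": "case-envelope/1.0",
    "case_id": "C-001",
    "program_id": "housing-relief",
    "applicant_ref": "A-42",
    "proofs": [{"type": "residency"}, {"type": "utility_bill"}],
}
print(validate_envelope(package))  # ['UNKNOWN_PROOF_TYPE:utility_bill']
```

Rejecting malformed packages with standard codes means every channel gets the same answer for the same mistake, instead of each integration failing in its own way.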

Conformance here should be concrete. A provider is not certified because a committee says so. They are certified because, given a published test case package, they produce the published expected decision and trace. Certification can be continuous, not annual.
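Conformance testing of this kind can be sketched as a small harness: the regulator publishes test case packages with expected decisions and reason codes, and a candidate engine is certified only if it reproduces all of them. The suite contents and the `candidate_engine` function are hypothetical. Re-running the harness on every release is one way certification could be continuous.

```python
from typing import Callable

Engine = Callable[[dict], tuple[str, list[str]]]

# Hypothetical published conformance suite: input facts plus expected outputs.
PUBLISHED_SUITE = [
    {"id": "T1", "facts": {"annual_income": 90_000}, "expected": ("ELIGIBLE", ["R-INCOME-OK"])},
    {"id": "T2", "facts": {"annual_income": 150_000}, "expected": ("INELIGIBLE", ["R-INCOME-ABOVE-LIMIT"])},
    # Boundary case: income exactly at the published limit is eligible.
    {"id": "T3", "facts": {"annual_income": 100_000}, "expected": ("ELIGIBLE", ["R-INCOME-OK"])},
]


def run_conformance(engine: Engine, suite: list[dict]) -> list[str]:
    """Return IDs of failing test cases; certification requires an empty list."""
    failures = []
    for case in suite:
        decision, reasons = engine(case["facts"])
        expected_decision, expected_reasons = case["expected"]
        if decision != expected_decision or sorted(reasons) != sorted(expected_reasons):
            failures.append(case["id"])
    return failures


def candidate_engine(facts: dict) -> tuple[str, list[str]]:
    # A provider implementation with an off-by-one bug at the published limit.
    if facts["annual_income"] < 100_000:
        return "ELIGIBLE", ["R-INCOME-OK"]
    return "INELIGIBLE", ["R-INCOME-ABOVE-LIMIT"]


print(run_conformance(candidate_engine, PUBLISHED_SUITE))  # ['T3']
```

The boundary case is the point: a committee might not notice `<` versus `<=`, but a published test case does.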

Fact Registry and Registrars: separate truth ownership from delivery

Every state has facts that determine eligibility and obligation. The issue is that facts are often inaccessible, unverifiable, and uncorrectable in practice. In the Minimum Digital Kernel, registrars remain the institutional owners of facts. They have the authority and duty to update and correct, with audit.

The Fact Registry is the system of record for those facts. The ecosystem can consume facts without rewriting them, and corrections happen only through the registrar. This prevents the proliferation of shadow databases that emerge when delivery actors have no reliable way to reference authoritative facts.

In early phases, facts can be accessed through APIs or presented as verifiable credentials issued by registrars. High-capacity environments may begin with APIs and move toward credentials for privacy and portability. Low-capacity environments may do the reverse: start with credentials to reduce integration and uptime burdens, and add APIs later where real-time queries are necessary.
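A minimal sketch of separating truth ownership from consumption, assuming each fact type has exactly one owning registrar: anyone may read a versioned fact, but only the owner may write, and every write is logged with the previous value and a reason. The fact types, registrar IDs, and record layout are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class FactRegistry:
    """System of record: reads are open, writes are restricted to the owning registrar."""
    owners: dict[str, str]  # fact type -> registrar id (as published in the trust directory)
    _facts: dict[tuple[str, str], dict] = field(default_factory=dict)
    audit_log: list[dict] = field(default_factory=list)

    def read(self, fact_type: str, subject: str) -> dict:
        # Returns the value plus a version number that a decision trace can reference.
        return self._facts[(fact_type, subject)]

    def write(self, registrar_id: str, fact_type: str, subject: str, value: str, reason: str) -> None:
        if self.owners.get(fact_type) != registrar_id:
            raise PermissionError(f"{registrar_id} does not own fact type {fact_type!r}")
        previous = self._facts.get((fact_type, subject))
        version = previous["version"] + 1 if previous else 1
        self._facts[(fact_type, subject)] = {"value": value, "version": version}
        self.audit_log.append({
            "registrar": registrar_id, "fact": fact_type, "subject": subject,
            "from": previous["value"] if previous else None, "to": value,
            "version": version, "reason": reason,
        })


fact_registry = FactRegistry(owners={"address": "registrar-municipal"})
fact_registry.write("registrar-municipal", "address", "A-42", "Old Ward 3", "initial registration")
fact_registry.write("registrar-municipal", "address", "A-42", "New Ward 7", "citizen correction request")

try:
    fact_registry.write("delivery-app-X", "address", "A-42", "Ward 9", "app override")
except PermissionError as e:
    print("rejected:", e)

print(fact_registry.read("address", "A-42"))  # {'value': 'New Ward 7', 'version': 2}
```

The delivery app can still read and reference the address, but it cannot become a shadow source of truth for it.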

Shared Certified Rules Engine: coherence as a utility, not a monopoly portal

Many digitisation efforts force consistency through a single portal or a single monolithic system. That yields uniformity, but it constrains innovation, resilience, and inclusion. The kernel takes a different approach: decisioning becomes a shared utility that many channels can call.

A Shared Certified Rules Engine accepts a standardised case package, validates it against published schemas and proof profiles, retrieves the relevant rule version, verifies any credentials and issuer keys against the Trust Directory (under Trust Authority policy), evaluates the rules using certified runtimes, and returns a decision plus reason codes. Where a program relies on DPI rails, the engine’s trace should record identity assurance checks and any consented data pulls, just as it records rule versions and fact references.

The crucial output is the signed decision trace. At minimum, it binds the rule version applied, references to facts used (or presented proofs), verification checks performed, reason codes and intermediate determinations, timestamps, and the signer identity of the certified engine/runtime.
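The minimum trace fields listed above can be sketched as a canonical record plus a signature. This sketch uses the Python standard library's HMAC purely as a stand-in so it runs without dependencies. A real deployment would use asymmetric signatures (for example Ed25519), so that anyone can verify a trace against the public key published in the Trust Directory without holding a secret. All field names and identifiers are assumptions.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone


def canonical_bytes(record: dict) -> bytes:
    """Deterministic serialization so the same trace always produces the same bytes."""
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_trace(trace: dict, signer_id: str, key: bytes) -> dict:
    # Stand-in signature (HMAC-SHA256); swap for a public-key scheme in practice.
    body = dict(trace, signer_id=signer_id)
    signature = hmac.new(key, canonical_bytes(body), hashlib.sha256).hexdigest()
    return {"body": body, "signature": signature}


def verify_trace(signed: dict, key: bytes) -> bool:
    expected = hmac.new(key, canonical_bytes(signed["body"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signed["signature"])


ENGINE_KEY = b"demo-key-not-for-production"  # hypothetical

trace = {
    "case_id": "C-001",
    "rule_version": "housing-relief/v1",
    "fact_refs": [{"type": "address", "subject": "A-42", "version": 1}],
    "checks": ["schema:case-envelope/1.0:ok", "issuer-key:registrar-municipal:ok"],
    "intermediate": {"address_match": False},
    "reason_codes": ["R-ADDRESS-MISMATCH"],
    "decision": "INELIGIBLE",
    "decided_at": datetime(2025, 1, 15, tzinfo=timezone.utc).isoformat(),
}
signed = sign_trace(trace, "engine-A/key-1", ENGINE_KEY)
print(verify_trace(signed, ENGINE_KEY))  # True

signed["body"]["decision"] = "ELIGIBLE"  # a quiet after-the-fact edit
print(verify_trace(signed, ENGINE_KEY))  # False
```

Canonical serialization matters as much as the signature: without it, two systems could serialize the same trace differently and fail verification for reasons unrelated to tampering.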

This is what makes plural delivery safe. Many channels can compete on intake and fulfilment, but eligibility and decisioning remain consistent and auditable.

A common objection is that this sounds like centralisation. The answer is governance and interface discipline. The decisioning utility is shared, but it can be operated as a utility with published conformance suites, transparent versioning, public SLAs, and independent oversight. Over time, provider-hosted certified engines can be permitted, but one invariant remains: the decision trace format and verification requirements stay mandatory, and certification stays enforceable via the trust directory.

Evidence Store: accountability by default

Accountability should not be a paperwork process. It should be structural. The Evidence Store is append-only and tamper-evident, and it stores signed decision traces plus the minimum supporting artefacts needed to prove what happened. Oversight bodies should not need to reconstruct cases from screenshots and affidavits.

This layer enables ex post accountability, including audits, investigations, and court review, but it also enables ex ante learning: which rules cause mass rejections, which registries generate disputes, which intermediaries create errors, and where citizen burden accumulates.

Privacy must be a hard invariant, not a footnote. Where feasible, the store should retain references, hashes, and minimum disclosure proofs rather than raw personal data. Raw case material should be stored only when legally necessary for adjudication, with strict retention and access controls. Evidence used for adjudication must be separated from aggregated analytics used for program improvement. Every access to evidence should itself be logged, so oversight can audit not only decisions but also who looked at which citizen’s case and why.
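One common way to make a store tamper-evident is a hash chain: each entry commits to the hash of the previous one, so editing or deleting any past entry breaks every later link. The sketch below assumes that approach (real systems may instead use Merkle-tree transparency logs). It stores only a digest of each trace rather than personal data, and it logs every read as its own entry. All names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64


def entry_hash(prev_hash: str, payload: dict) -> str:
    data = prev_hash + json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(data.encode("utf-8")).hexdigest()


class EvidenceStore:
    """Append-only, hash-chained log. There is deliberately no update or delete method."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def _append(self, payload: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        h = entry_hash(prev, payload)
        self.entries.append({"prev": prev, "payload": payload, "hash": h})
        return h

    def record_trace(self, case_id: str, trace_bytes: bytes) -> str:
        # Store a digest and a reference, not the raw personal data.
        digest = hashlib.sha256(trace_bytes).hexdigest()
        return self._append({"kind": "trace", "case_id": case_id, "trace_sha256": digest})

    def log_access(self, who: str, case_id: str, purpose: str) -> str:
        # Every access to evidence is itself recorded as evidence.
        return self._append({"kind": "access", "who": who, "case_id": case_id, "purpose": purpose})

    def verify_chain(self) -> bool:
        prev = GENESIS
        for e in self.entries:
            if e["prev"] != prev or entry_hash(prev, e["payload"]) != e["hash"]:
                return False
            prev = e["hash"]
        return True


store = EvidenceStore()
store.record_trace("C-001", b'{"decision":"INELIGIBLE"}')
store.log_access("appellate-bench-2", "C-001", "appeal hearing")
print(store.verify_chain())  # True

store.entries[0]["payload"]["trace_sha256"] = "tampered"
print(store.verify_chain())  # False
```

A chain alone only detects edits if the latest hash is published or witnessed outside the operator's control; otherwise an operator could rewrite and rehash the whole log.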

Appellate Authority: contestation with power to change outcomes

Automation without remedy is tyranny disguised as efficiency. The appellate function in the kernel is not a customer support inbox. It is a structured adjudication capability that starts from the signed decision trace rather than forcing the citizen to re-litigate the entire case.

The Appellate Authority should be able to validate which rule version was applied, whether required verification steps in the published proof profile were followed, whether the signer and runtime were certified at the time of execution, and whether the fact references were legitimate. Then it must have the power to order remedy, not merely review.

Remedy should propagate in four directions. It can uphold, override, or remand a decision and trigger recomputation, binding the case to the same rule version that applied at the time. It can order correction of a fact at the registrar, and only the registrar performs the authoritative write to the Fact Registry, preserving truth ownership and auditability. It can flag rule ambiguity or demonstrated harm to the regulator, backed by evidence rather than anecdotes. And it can trigger sanctions, suspension, or decertification actions for process violations through the relevant certification authority, so enforcement is real but procedurally constrained.
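The correction-to-recomputation path can be sketched in a few lines. Assumptions: a trace records the rule version and fact version it used, the appellate authority orders a correction, only the registrar writes, and recomputation reuses the rule version bound in the original trace while reading the corrected fact. Every identifier here is hypothetical, and the rule is a toy address check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trace:
    case_id: str
    rule_version: str
    fact_version: int
    decision: str
    reason_codes: list[str]


def address_rule_v1(registered: str, claimed: str) -> tuple[str, list[str]]:
    """Toy rule: the claimed address must match the registrar's record."""
    if registered == claimed:
        return "ELIGIBLE", ["R-ADDR-OK"]
    return "INELIGIBLE", ["R-ADDRESS-MISMATCH"]


RULES = {"v1": address_rule_v1}  # hypothetical rulebook keyed by version

# Registrar-owned address facts, versioned: subject -> values in version order.
address_history = {"A-42": ["Old Ward 3"]}


def decide(case_id: str, rule_version: str, subject: str, claimed: str) -> Trace:
    versions = address_history[subject]
    decision, reasons = RULES[rule_version](versions[-1], claimed)
    return Trace(case_id, rule_version, len(versions), decision, reasons)


def registrar_correct(subject: str, value: str) -> None:
    # Only the registrar performs the authoritative write (after verifying the claim).
    address_history[subject].append(value)


def appellate_remedy(original: Trace, subject: str, claimed: str, corrected_value: str) -> Trace:
    """Order the registrar correction, then recompute under the SAME rule version."""
    registrar_correct(subject, corrected_value)
    return decide(original.case_id, original.rule_version, subject, claimed)


first = decide("C-001", "v1", "A-42", claimed="New Ward 7")
print(first.decision, first.reason_codes)  # INELIGIBLE ['R-ADDRESS-MISMATCH']

second = appellate_remedy(first, "A-42", "New Ward 7", corrected_value="New Ward 7")
print(second.decision, second.rule_version, second.fact_version)  # ELIGIBLE v1 2
```

The original trace is not overwritten. The recomputed trace records a new fact version under the same rule version, so both the error and the remedy stay on the record.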

A signed trace proves what happened. It does not, by itself, prove that the outcome is just. That is why an appellate function with human judgment, constrained by trace evidence and bound to published rules, is part of the minimum kernel rather than an optional add-on.

Audit Authority: systemic oversight without re-litigating cases

The Appellate Authority corrects individual outcomes. Audit detects systemic failure modes. An Audit Authority should have read-only access to the evidence store, and access to trust metadata and certification status in the trust directory. It can identify rule harm patterns, compliance failures, and systemic issues, and recommend corrective actions, including suspension or decertification through the relevant certifying authorities.
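Audit works on patterns rather than on individual cases. A minimal sketch, assuming traces carry a delivery channel and reason codes: compare each channel's rate of a given reason code against the program-wide rate and flag large outliers. The 2x ratio, the minimum case count, and the field names are arbitrary illustrative choices, and the traces are synthetic.

```python
from collections import Counter


def flag_outlier_channels(traces: list[dict], reason: str,
                          ratio: float = 2.0, min_cases: int = 20) -> list[str]:
    """Return channels whose rate of `reason` exceeds `ratio` times the overall rate."""
    total = len(traces)
    overall_hits = sum(reason in t["reason_codes"] for t in traces)
    if total == 0 or overall_hits == 0:
        return []
    overall_rate = overall_hits / total
    per_channel = Counter(t["channel"] for t in traces)
    hits = Counter(t["channel"] for t in traces if reason in t["reason_codes"])
    return sorted(
        ch for ch, n in per_channel.items()
        if n >= min_cases and hits[ch] / n > ratio * overall_rate
    )


def synthetic(channel: str, mismatches: int, others: int) -> list[dict]:
    # Synthetic traces for illustration only (not real data).
    return ([{"channel": channel, "reason_codes": ["R-ADDRESS-MISMATCH"]}] * mismatches
            + [{"channel": channel, "reason_codes": ["R-OK"]}] * others)


traces = synthetic("counter-1", 5, 95) + synthetic("counter-2", 5, 95) + synthetic("app-Z", 40, 60)
print(flag_outlier_channels(traces, "R-ADDRESS-MISMATCH"))  # ['app-Z']
```

A flag like this is a prompt for investigation, not a finding: the channel may simply serve a more mobile population. That is one reason audit recommends actions through certifying authorities rather than sanctioning automatically.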

Wallet: citizen-held proofs and consent, as an adoption accelerator

The wallet is not the kernel’s starting point, but it is the kernel’s scaling path. Credentials issued by registrars can land in the citizen’s wallet. When applying for a service, the citizen can present proofs via consented sharing using standard proof profiles. Outcomes and orders, including appeal orders, can also be issued as durable artifacts.

This reduces paperwork, but more importantly it shifts power: citizens carry portable proofs, while registrars remain accountable for the underlying facts.

How a decision flows

A citizen applies through any certified provider or intermediary operating a certified delivery channel. That channel authenticates the applicant using the prevailing identity mechanism in that context (which may be as simple as a phone number or as strong as a national digital ID), packages the application into the standard case envelope, and requests consent to present required proofs or to pull required facts via a data exchange rail where available. The channel calls the Shared Certified Rules Engine. The engine validates the case package against schemas, verifies proofs against the published profile, verifies issuer keys and runtime certification via the trust directory, retrieves the relevant rule version, evaluates eligibility, and returns a decision plus reason codes and a signed decision trace.

The trace is logged automatically in the Evidence Store. If the decision results in action, fulfilment happens through the delivery channel. If the citizen disputes the outcome, they appeal using the trace as the starting point. The appellate authority can uphold, override, remand, or correct, and can order remedies that trigger registrar correction and recomputation, or sanction process violations. Audit consumes evidence to detect systemic patterns without re-running every case.

What is truly “kernel,” and what is ecosystem

To keep the “minimum” honest, it helps to separate what is mandatory from what can be phased. Mandatory on day one is the Trust Authority and Trust Directory of Authority, versioned Rules and Semantic Contracts (at least schemas and envelopes), a certified decisioning utility that emits signed decision traces, an evidence store, an appellate authority that consumes traces and can order remedies that propagate through registrars and recomputation, and an audit authority for systemic oversight.

Phaseable over time is expanding coverage and sophistication: broader credentialization of facts, selective disclosure proofs, wallets at scale where appropriate, and deeper API integration where real-time verification is needed. Credential mode requires manageable issuance and revocation (or short-lived credentials) to avoid stale proofs.
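The stale-proof risk can be made concrete with a freshness check a verifier might run at presentation time: reject a credential that is past its validity window or that appears on the issuer's published revocation list. The credential fields, the 30-day window, and the revocation-list shape are assumptions for illustration, not any specific credential standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Credential:
    credential_id: str
    issuer_id: str
    claim: dict
    issued_at: datetime
    valid_for: timedelta  # short-lived credentials use a small window


def check_freshness(cred: Credential, presented_at: datetime, revoked_ids: set[str]) -> str:
    """Return a reason code stating whether the proof can be relied on."""
    if cred.credential_id in revoked_ids:
        return "R-CREDENTIAL-REVOKED"
    if presented_at > cred.issued_at + cred.valid_for:
        return "R-CREDENTIAL-EXPIRED"
    return "R-CREDENTIAL-FRESH"


issued = datetime(2025, 1, 1, tzinfo=timezone.utc)
residency = Credential("cred-7", "registrar-municipal", {"ward": "Old Ward 3"},
                       issued, timedelta(days=30))

print(check_freshness(residency, issued + timedelta(days=10), revoked_ids=set()))       # R-CREDENTIAL-FRESH
print(check_freshness(residency, issued + timedelta(days=45), revoked_ids=set()))       # R-CREDENTIAL-EXPIRED
print(check_freshness(residency, issued + timedelta(days=10), revoked_ids={"cred-7"}))  # R-CREDENTIAL-REVOKED
```

Shorter validity windows trade higher issuance load for less dependence on revocation lists. Returning a reason code rather than a bare boolean keeps the outcome explainable in the trace.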

Sequencing: how to build without creating a new monolith

Start with one program where rule-heavy decisions create high citizen friction. Publish the rules, schemas, envelopes, and reason codes. Stand up the certified decisioning utility and mandate signed decision traces. Introduce the evidence store so every decision is reviewable by default. Formalize an appellate authority with technical power to order remedies that propagate to registrars and recomputation, and establish audit access for systemic monitoring.

Once the kernel is stable, open certification to multiple delivery channels as early as possible: service centers, NGOs, fintechs, private apps, local intermediaries, and departmental counters. That is when unbundling becomes real.

Closing the loop: why the citizen’s “address mismatch” story ends differently

Return to the citizen who was rejected for “address mismatch.” In the Minimum Digital Kernel, the rejection cannot be a dead-end message on a screen. The provider must return a decision trace in a standard format. The citizen can see the rule version applied, the precise reason code, and which fact reference triggered the mismatch. There is no mystery about whether the problem is policy, data, or process.

If the mismatch is real, the trace points to the authoritative registrar for address, because the trust directory makes truth ownership legible. The citizen does not have to guess which office to visit or rely on an agent. They can initiate a correction request against the registrar, and that correction is auditable because registrars are accountable for updates to the Fact Registry.

If the mismatch is not real, or the provider verified the proof incorrectly, the citizen appeals with the trace as evidence. The appellate authority does not restart the case from scratch. It validates whether the correct rule version ran, whether the credential verification steps in the published profile were followed, and whether the fact reference was legitimate. If the provider made an error, the remedy is clear: remand and recompute under the same rule version with the corrected verification, and, if needed, sanction the provider for process violations.

If the underlying fact is wrong, the remedy is also clear: the appellate authority can order the registrar to correct the fact, and once corrected, the system recomputes the decision. The citizen is not trapped in a loop of “come back later,” because the architecture has a built-in propagation path from correction to recomputation.

If the rule itself is ambiguous or harmful, that ambiguity becomes visible as a pattern in reason codes and appellate outcomes, and in audit findings. The regulator does not learn about failures through newspapers or anecdotes. It learns through the evidence layer. The next rule version can be improved, and future traces bind to that version. Accountability survives change.

Most importantly, the local agent’s business model collapses. Not because we outlaw agents, but because the system no longer requires informal navigation. Authority is discoverable, decisions are explainable, and remedies are executable.

That is the promise of the Minimum Digital Kernel. Citizens still interact with many channels, public and private, but they do not depend on discretion, informal power, or opaque software outcomes. When something goes wrong, the system can prove what happened and has a mechanism to fix it.

Why this is important now

In India and many developing countries, the stakes for defendable, traceable decisions are exceptionally high. Internal migration is already reshaping cities at enormous scale, and credible estimates put the stock of internal migrants in the hundreds of millions, with some recent analyses citing figures above 600 million. Climate change is likely to amplify this churn, including through climate-related displacement and internal climate migration over coming decades, with projections for South Asia in the tens of millions by 2050 under multiple scenarios.

Eligibility systems for benefits, housing, rations, and relief will therefore face unprecedented strain. Address changes, residency proofs, and fact corrections routinely fail silently, trapping vulnerable people in loops of exclusion or forcing reliance on informal agents. Meanwhile, India’s courts carry a massive pending-case load: National Judicial Data Grid (NJDG) data shows roughly 48.6 million pending cases in district and subordinate courts alone. Many of these originate in, or are aggravated by, opaque administrative decisions that could be resolved upstream if traces showed exactly which rule ran, which fact was used, and how to propagate a fix.

The Minimum Digital Kernel addresses these pressures head-on. By making decisions verifiable and remedies enforceable at scale, it reduces mass exclusions, shifts resolution upstream through administrative appeals and auditable correction paths, and builds resilience against future shocks. In doing so, it extends DPI’s foundational rails (identity, payments, data exchange) with an accountable governance layer for equitable, adaptive service delivery in fast-changing societies.

Why this becomes critical in the age of AI diffusion

As AI becomes embedded in eligibility screening, fraud detection, risk scoring, prioritization, and grievance triage, the scale and speed of administrative decision-making will increase dramatically. That is the promise, and the danger. Without structural safeguards, opacity scales with automation. A model can deny or downgrade thousands of cases in seconds, but if there is no signed decision trace binding the outcome to a specific rule version, the facts or proofs relied on, the verification checks performed, the reason codes produced, and the accountable authority, contestation collapses into guesswork.

The Minimum Digital Kernel does not try to “regulate the model” as an object. It regulates the decision as a legal act. It forces model-assisted judgments to pass through a traceable decision envelope: the model may propose a classification or risk signal, but the outcome must still be decided under published rules, expressed in standard reason codes, executed by certified runtimes, and recorded in an audit-grade evidence store. The trace must also capture the model’s role in the decision, at least as a declared input (for example, “risk score used,” model/version, and threshold applied), so appellate and audit authorities can see when and how automation influenced outcomes.
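A sketch of the “declared input” pattern: the model contributes only a score, the published rule version supplies the threshold, and the trace records model identity, version, score, and threshold alongside the reason code. The model name, threshold value, and field names are hypothetical. The point is that the threshold comes from the versioned rulebook rather than from hidden vendor configuration.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class PublishedRule:
    version: str
    review_threshold: float  # published in the rulebook, not set in vendor config


@dataclass(frozen=True)
class ModelInput:
    model_id: str
    model_version: str
    score: float


def decide_with_model(case_id: str, rule: PublishedRule, model: ModelInput) -> dict:
    """The model proposes a signal; the published rule decides and names the reason."""
    if model.score >= rule.review_threshold:
        decision, reasons = "REFER_TO_HUMAN_REVIEW", ["R-RISK-SCORE-AT-OR-ABOVE-THRESHOLD"]
    else:
        decision, reasons = "PROCEED", ["R-RISK-SCORE-BELOW-THRESHOLD"]
    return {
        "case_id": case_id,
        "rule_version": rule.version,
        "decision": decision,
        "reason_codes": reasons,
        # Declared model role, so appellate and audit bodies can see how automation weighed in.
        "model_input": {**asdict(model), "threshold_applied": rule.review_threshold},
    }


trace = decide_with_model(
    "C-001",
    PublishedRule(version="fraud-screen/v3", review_threshold=0.8),
    ModelInput(model_id="risk-model-hypothetical", model_version="2025.01", score=0.83),
)
print(trace["decision"], trace["model_input"])
```

Routing high scores to human review rather than to automatic denial is one design choice among several; the trace structure is the same either way.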

This is what makes responsible diffusion possible. Governments will adopt AI unevenly across programs, states, and vendors. The kernel provides the invariant interface that prevents that diffusion from becoming discretionary chaos. It allows innovation at the edges, including new models and new delivery channels, while preserving due process at the core: legible authority, reproducible decisions, and remedies that can actually change outcomes.

Thank you for sharing this clear articulation of the governance layer problem. It's such a massive task; I would just like to add some points to think about as this gets developed further.

On the Trust Authority: the piece separates the Directory (publication layer) from trust governance (certification, revocation, policy), which feels right. But the constitutional question remains open - who creates the Trust Authority, under what legal instrument, and how are its decisions challenged? Without constitutional anchoring, there is no judicial pathway into the authority's decisions.

On registrar enforcement: the kernel assumes registrars will execute correction orders from the Appellate Authority. But the design doesn't specify deadlines, penalties for non-compliance, or compensation if delay causes harm. Without timelines and penalties, remedy propagation collapses.

On AI thresholds: the piece rightly says the trace must capture model version and threshold applied. But logging which threshold was used is not the same as justifying it. An audit trail that proves a threshold was applied doesn't establish that it was fair or proportionate. Without publication and review, accountability shifts into configuration space.

On fact provenance: the model seems to be working well for stable, authority-held facts. It's less clear how it handles facts generated by sensors, satellites, or computational models - data that can change and can be wrong in ways that aren't legible from the output alone. For those cases, the trace needs source, model version, and confidence level alongside the dispute path. Without uncertainty metadata, traceability does not equal epistemic reliability.

None of these are reasons to pull back from the proposal; they're the next layer of detail needed to make it more operationally credible.

Very interesting, and it recapitulates a point central to my own work at https://foundationsofthedigitalstate.com/. There is a paradox: decentralisation requires a kernel of centralism. This proposal also requires fundamental law reform, because the various processes being contested usually have their appeals processes and bodies defined in law. Going to implementation will therefore require re-aligning all those entities to use the proof format and, where necessary, merging and reorganising them.